shared responsibility model

What is a shared responsibility model?

A shared responsibility model is a cloud security framework that dictates the security obligations of a cloud computing provider and its users to ensure accountability.

When an enterprise runs and manages its own IT infrastructure on premises, within its own data center, the enterprise -- and its IT staff, managers and employees -- is responsible for the security of that infrastructure, as well as the applications and data that run on it. When an organization moves to a public cloud computing model, it hands off some, but not all, of these IT security responsibilities to its cloud provider. Each party -- the cloud provider and cloud user -- is accountable for different aspects of security and must work together to ensure full coverage.

While the responsibility for security in a public cloud is shared between the provider and the customer, it's important to understand how the responsibilities are distributed depending on the provider and the specific cloud model.

Different types of shared responsibility models

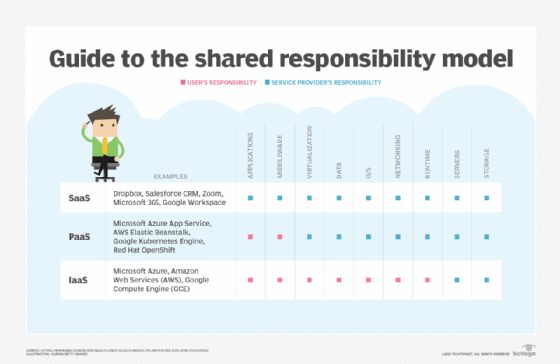

The type of cloud service model -- infrastructure as a service (IaaS), platform as a service (PaaS) and software as a service (SaaS) -- dictates who is responsible for which security tasks. According to the Cloud Standards Customer Council, an advocacy group for cloud users, users' responsibilities generally increase as they move from SaaS to PaaS to IaaS.

- IaaS. The cloud provider is responsible for services and storage -- the basic cloud infrastructure components such as virtualization layer, disks and networks. The provider is also responsible for the physical security of the data centers that house its infrastructure. IaaS users, on the other hand, are generally responsible for the security of the OS and software stack required to run their applications, as well as their data.

- PaaS. When the provider supplies a more comprehensive platform, the provider assumes greater responsibility that extends to the platform applications and OSes. For example, the provider ensures that user subscriptions and login credentials are secure, but the user is still responsible for the security of any code or data -- or other content -- produced on the platform.

- SaaS. The provider is responsible for almost every aspect of security, from the underlying infrastructure to the service application, such as an HR or finance tool, to the data the application produces. Users still bear some security responsibilities such as protecting login credentials from phishing or social engineering attacks.
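
The split described above can be sketched as a small lookup table. This is an illustrative simplification, not any provider's official matrix: the layer names, the `Owner` values and the `customer_duties` helper are all assumptions made for this example, and the "shared" entries reflect one reading of the PaaS and SaaS descriptions.

```python
from enum import Enum


class Owner(Enum):
    PROVIDER = "provider"
    CUSTOMER = "customer"
    SHARED = "shared"


P, C, S = Owner.PROVIDER, Owner.CUSTOMER, Owner.SHARED

# Hypothetical layers, listed from the bottom of the stack to the top.
# Real boundaries vary by provider and service; check the provider's terms.
RESPONSIBILITY = {
    "physical":         {"iaas": P, "paas": P, "saas": P},
    "virtualization":   {"iaas": P, "paas": P, "saas": P},
    "operating_system": {"iaas": C, "paas": P, "saas": P},
    "application":      {"iaas": C, "paas": C, "saas": P},
    "data":             {"iaas": C, "paas": C, "saas": S},
    "credentials":      {"iaas": C, "paas": S, "saas": C},
}


def customer_duties(model: str) -> list[str]:
    """Return the layers where the customer has at least some responsibility."""
    key = model.lower()
    return [
        layer
        for layer, owners in RESPONSIBILITY.items()
        if owners[key] is not Owner.PROVIDER
    ]


for model in ("SaaS", "PaaS", "IaaS"):
    print(model, customer_duties(model))
```

In this sketch, the list of customer duties grows from SaaS to PaaS to IaaS, which matches the general pattern described above.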

Pros and cons of a shared responsibility model

Although cloud computing is now well established, it reached broad acceptance only in the last few years, so the concept of shared responsibility can still seem daunting and confusing. As with most technologies, there are tradeoffs to consider. The benefits are easy to see, such as the following:

- Ease of use. With shared responsibility, the provider shoulders much of the security responsibility for the infrastructure, relieving users of a burden they traditionally carried. This shortens the list of things users must worry about and can make their remaining security tasks quicker and easier.

- Solid expertise. Cloud providers devote substantial resources and expertise to infrastructure security, and they are typically quite good at it. This can be a significant benefit for small-to-mid-sized organizations that might lack in-house security expertise.

Still, any cloud user must consider a series of potential risks or disadvantages in a shared responsibility model, including the following:

- Trust. Users must be able to trust that cloud providers are delivering on their security responsibilities. This can be difficult for large businesses with sensitive data -- and impossible for some types of businesses.

- Knowledge. To handle their part of shared responsibility, users must have a detailed understanding of the provider's tools, resources and configuration settings so that workloads and data running within the cloud's infrastructure are properly secured -- for example, by enabling encryption.

- Changes. Providers change their infrastructure and services over time -- through API updates, for example -- and users must track those changes so that configurations and settings remain properly secured.

- Differences. The basic idea of shared responsibility is straightforward, but the actual lines of demarcation can differ depending on the provider and the specific shared responsibility model. Customers should read and understand the fine print for each provider to ensure that they fulfill all of their specific responsibilities.

- Consequences. Simply putting workloads and data in a cloud doesn't relieve the business of the consequences of hacks or data breaches. Provider service-level agreements (SLAs) routinely hold a provider harmless for any security oversights or errors.

The customer's typical cloud security responsibilities

In general terms, a cloud customer is always responsible for configurations and settings that are under their direct control, including the following:

- Data. A user must ensure that any data created on or uploaded to the cloud is properly secured. This can include the user's creation of authorizations to access the data, as well as the use of encryption to protect the data from unauthorized access.

- Applications. If a user places a workload into a cloud VM, the user is still fully responsible for securing that workload. This can include creating secure (hardened) code through proper design, testing and patching; configuring and maintaining proper identity and access management (IAM); and securing any integrations with connected systems such as local databases or other workloads.

- Credentials. Users control the IAM environment, including login mechanisms, single sign-on, certificates, encryption keys, passwords and any multifactor authentication items, and are responsible for securing it.

- Configurations. The process of provisioning a cloud environment includes a significant amount of user control through configuration settings. Any cloud instances must be configured in a secure manner using the provider's tools and options.

- Outside connections. Beyond the cloud, the user is still responsible for anything in the business that connects to the cloud such as traditional local data center infrastructure and applications.
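
As a rough illustration of checking settings that fall on the customer's side, the sketch below audits a description of a cloud resource for a few of the items listed above. The `ResourceSettings` fields and the `audit` function are hypothetical names invented for this example; they do not correspond to any provider's API, and a real audit would read these values from the provider's own tools.

```python
from dataclasses import dataclass


@dataclass
class ResourceSettings:
    """Hypothetical snapshot of customer-controlled settings for one resource."""
    name: str
    encrypted_at_rest: bool
    publicly_readable: bool
    mfa_required: bool


def audit(resource: ResourceSettings) -> list[str]:
    """Return a finding for each customer-side setting that looks unsafe."""
    findings = []
    if not resource.encrypted_at_rest:
        findings.append(f"{resource.name}: encryption at rest is disabled")
    if resource.publicly_readable:
        findings.append(f"{resource.name}: resource is publicly readable")
    if not resource.mfa_required:
        findings.append(f"{resource.name}: multifactor authentication is not required")
    return findings


reports = ResourceSettings(
    "reports", encrypted_at_rest=False, publicly_readable=True, mfa_required=True
)
for finding in audit(reports):
    print(finding)
```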

The provider's typical cloud security responsibilities

Public clouds present a vast and complex infrastructure, and cloud providers will always be completely responsible for that infrastructure, including the following components:

- Physical layer. The provider manages and protects the elements of its physical infrastructure. This includes servers, storage, network gear and other hardware as well as facilities. An infrastructure typically includes various resilient architectures such as redundancy and failover, as well as redundant power and network carrier connectivity. Infrastructure management also frequently includes backup, restoration and disaster recovery implementations.

- Virtualization layer. Public clouds are fundamentally do-it-yourself environments where users can provision and use as many resources and services as they wish. But such flexibility demands a high level of virtualization, automation and orchestration within the provider's infrastructure. The provider is responsible for implementing and maintaining this virtualization/abstraction layer as well as its various APIs, which serve as the means of user access and interaction with the infrastructure.

- Provider services. Cloud providers typically offer a range of dedicated or pre-built services such as databases, caches, firewalls, serverless computing, machine learning and big data processing. These pre-built services can be provisioned and used by customers but are completely implemented and managed by the cloud providers -- including any OSes and applications needed to run those services.

Divided cloud security responsibilities

Although many security responsibilities have clear delineations, there are some responsibilities that might be unclear or changeable depending on the service or provider. Users must pay particular attention to provider SLAs and understand the lines of responsibility precisely in the following areas:

- Native vs. third party. Whoever deploys and runs a service is responsible for it. For example, if a cloud customer uses a database offered by a cloud provider, the provider is responsible for deploying, managing, maintaining and updating that service -- though the customer is still responsible for managing and securing any data generated or accessed by that service. However, if a cloud user deploys a database as a workload in a cloud instance, the user is responsible for managing and running that application and its data, and the provider is responsible only for the infrastructure and virtualization layer.

- Server-based vs. serverless computing. If a cloud user selects a traditional server-based VM, the user is responsible for OS selection, workload deployment and any associated security/configuration settings. If a cloud user selects a serverless (event-based) computing option, the provider manages the underlying servers and OSes, while the user is responsible for the code uploaded to the service, as well as any user security/configuration options provided through the control plane.

- Network controls. Consider a network service such as a firewall. Regardless of whether the user deploys the firewall or uses a provider's firewall service, the user is responsible for setting the firewall rules and ensuring that the firewall is configured properly to guard the user's associated applications or other network elements.

- OSes. Whether a user brings their own OS or deploys an OS supplied by a provider, the user generally gets to decide which OS to use, and this decision brings a host of other security issues. The user is responsible for ensuring that the OS is properly configured with appropriate security settings and adequately patched for security requirements.
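
Because firewall rules remain the user's responsibility either way, a simple review of those rules can be worth automating. The following is a minimal sketch, assuming rules are available as dictionaries with a `port` and a `source` CIDR range; that rule format, the `SENSITIVE_PORTS` set and the function names are assumptions for illustration, not any provider's rule schema.

```python
import ipaddress

# Assumed list of ports that should rarely be reachable from anywhere:
# 22 is SSH and 3389 is RDP.
SENSITIVE_PORTS = {22, 3389}


def is_open_to_world(cidr: str) -> bool:
    """True when the CIDR range covers every address (0.0.0.0/0 or ::/0)."""
    return ipaddress.ip_network(cidr, strict=False).prefixlen == 0


def risky_rules(rules: list[dict]) -> list[dict]:
    """Return inbound rules that expose a sensitive port to any source."""
    return [
        rule
        for rule in rules
        if rule["port"] in SENSITIVE_PORTS and is_open_to_world(rule["source"])
    ]


inbound = [
    {"port": 22, "source": "0.0.0.0/0"},    # SSH from anywhere: flagged
    {"port": 443, "source": "0.0.0.0/0"},   # public HTTPS: not flagged here
    {"port": 3389, "source": "10.0.0.0/8"}, # RDP from a private range only
]
for rule in risky_rules(inbound):
    print("review rule:", rule)
```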

Notable shared responsibility model examples

The rule of thumb for shared responsibility is that "if it belongs to you or you can touch it, you're responsible for it." This generally means that a cloud provider is responsible for securing the parts of the cloud that it directly controls, such as hardware, networks, services and facilities that run cloud resources. At the same time, a user is generally responsible for securing anything that they create within the cloud, such as the configuration of a cloud workload, selected services and infrastructure involved in the desired cloud environment. But the actual line isn't always clear and varies depending on the cloud model and provider, as in the examples below:

- AWS, a major IaaS provider, explains its shared responsibility model as users being responsible for security in the cloud -- including their data -- while AWS is responsible for the security of the cloud, meaning the compute, storage and networks that support the AWS public cloud.

- Microsoft Azure is similar, noting that users own their data and identities. Users are responsible for protecting their data and identities, on-premises resources and any cloud components that users control -- which can vary by service. But users are essentially responsible for data, endpoints, accounts and access management.

- Google adopts a similar posture, and generally divides responsibility categories into content, access policies, usage, deployment, web app security, identity, operations, access and authentication, network security, guest OS data, networking, storage and encryption, and audit logging.

Although the wording might be similar, users must understand the details of the shared responsibility model that apply to each specific cloud provider. This ensures that no aspect of security is accidentally overlooked, leaving vital business workloads and data exposed.

Best practices for shared responsibility cloud security

Cloud security typically involves an array of resources and services that might require some level of security intervention from both cloud providers and users. Although it's impossible to describe proper security measures for every possible circumstance, there are several best practices that can help to foster better security, such as the following:

- Understand SLAs. Because user responsibilities differ depending on cloud service model and provider, there is no standard shared responsibility model. To understand their cloud security responsibilities, users should reference the SLAs they have with their providers. Every cloud provider uses a master SLA, and many services and resources will likely include a separate SLA. Users must understand the security responsibilities for every resource and service included in their architected infrastructure. This can help avoid assumptions and misunderstandings that might leave security gaps or vulnerabilities.

- Focus on data. Cloud users are universally responsible for their data in the cloud, so they must ensure proper policies for data security. Many organizations classify and categorize data, and then implement security measures that are appropriate for each respective category -- using stricter security measures for more sensitive data.

- Focus on credentials. Cloud users are universally responsible for credentials in the cloud -- that is, defining who has access to what cloud resources, services and data. Combined with the sheer number of resources and services available in a public cloud, IAM complexity can become overwhelming. Use the tools offered by providers to help manage IAM and develop policies and processes to use those tools properly and consistently.

- Watch communication. Pay particular attention to communication and update notes from the cloud provider. Even seemingly mundane communication can include vital notifications of service updates and changes that can affect security responsibilities, such as API updates or patches to existing services.

- Consider tools. Some cloud users can benefit from cloud management tools designed to distill complex cloud environments into easier-to-digest dashboards and alert for cloud security issues. Tools can provide automated correction for undesired security changes. For example, if a user makes a storage instance public, a tool can potentially detect the change, send alerts and automatically make corrections according to established policy without human intervention.
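
The automated correction described in the last item can be sketched in a few lines. This is only an in-memory illustration under stated assumptions: the bucket dictionaries, the `allowed_public` exception list and the `enforce_private_storage` function are hypothetical, and a real tool would query and update storage settings through the provider's own interfaces.

```python
def enforce_private_storage(buckets, allowed_public=frozenset()):
    """Make unexpectedly public buckets private and return an alert for each.

    `buckets` is a list of dicts with "name" and "public" keys (an assumed
    format). Buckets named in `allowed_public` are approved exceptions.
    """
    alerts = []
    for bucket in buckets:
        if bucket["public"] and bucket["name"] not in allowed_public:
            bucket["public"] = False  # automatic correction per policy
            alerts.append(f"{bucket['name']}: public access removed by policy")
    return alerts


storage = [
    {"name": "logs", "public": True},   # exposed by mistake
    {"name": "site", "public": True},   # approved public website assets
    {"name": "db-backups", "public": False},
]
for alert in enforce_private_storage(storage, allowed_public={"site"}):
    print(alert)
```

Keeping the approved exceptions in an explicit list means the policy itself documents which public resources are intentional.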