How VPC networking changes affect AWS Lambda

AWS' old approach to connecting an enterprise's VPC to Lambda was inefficient and added complexity. Learn how the updated model attempts to address those shortcomings.

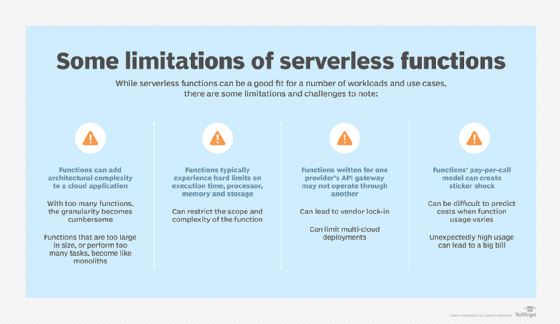

AWS Lambda functions can significantly reduce an organization's infrastructure footprint and management overhead, all while providing faster scalability in response to changing workloads. However, Lambda has some downsides due to its design and the ways AWS operates the service.

These shortcomings include variable startup times with cold starts, as well as language and capacity limitations. AWS recently addressed one notable drawback to Lambda: the cost and complexity of connecting functions to other AWS resources inside isolated virtual networks.

Below, we'll review the details of this change to Lambda's Amazon VPC networking and how it could affect enterprises' serverless workloads. But first, it's essential to understand the problems AWS needed to solve.

Lambda networking basics and previous limitations

When organizations start to use AWS for production workloads, they invariably want to use VPCs to partition resources into distinct networks, each with different network and security policies. This is typically done with one VPC for specific application resources and perhaps another VPC for shared databases and storage that serve multiple applications. Users have complete control over VPC addressing, traffic routing, firewall rules and other security settings.

Adding to this complexity, AWS runs and operates Lambda resources in a separate VPC that it controls. Until recently, the only default access to that environment was through the public HTTPS interface of the Lambda APIs. AWS initially met users' need to connect Lambda functions to their other resources with an elastic network interface (ENI) that linked the Lambda and customer VPCs. When configured this way, Lambda functions still executed in the AWS environment, but they could reach resources in an enterprise's VPC only through the ENI.

This model got complicated quickly, especially when an organization wanted to connect Lambda functions to multiple VPCs, because Lambda created ENIs for individual functions rather than sharing them across functions. The relationship wasn't strictly one ENI per connection, but a single function typically ended up with several ENIs, and the count grew as the function scaled out.

The original scheme was highly inefficient and created several problems:

- It increased cold-start time for new execution environments, which had to wait for an ENI to be created and attached.

- It consumed a private IP address in the subnet for each ENI.

- It counted against the per-region limit on ENIs.

- It could trigger API rate limits on creating new ENIs.
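To see how these problems compound, here is a rough worst-case model, in Python, of how legacy ENIs ate into a subnet's address space. It assumes, for illustration only, that every `envs_per_eni` concurrent execution environments needed one ENI and that each ENI consumed one private IP address; this is a simplification, not AWS's exact sizing formula. The one hard fact it relies on is that AWS reserves five IP addresses in every VPC subnet.

```python
import ipaddress
import math

# AWS reserves five addresses in every VPC subnet (the first four and the last).
AWS_RESERVED_PER_SUBNET = 5


def legacy_eni_footprint(subnet_cidr: str, concurrent_envs: int, envs_per_eni: int = 1) -> dict:
    """Rough worst-case model of the pre-Hyperplane scheme.

    Assumption (illustrative, not AWS's exact formula): each group of
    `envs_per_eni` concurrent execution environments needs its own ENI,
    and every ENI consumes one private IP address in the subnet.
    """
    if concurrent_envs < 0 or envs_per_eni < 1:
        raise ValueError("concurrent_envs must be >= 0 and envs_per_eni >= 1")
    subnet = ipaddress.ip_network(subnet_cidr)
    usable_ips = subnet.num_addresses - AWS_RESERVED_PER_SUBNET
    enis_needed = math.ceil(concurrent_envs / envs_per_eni)
    return {
        "usable_ips": usable_ips,
        "enis_needed": enis_needed,
        # A negative value means the subnet runs out of addresses.
        "ips_left": usable_ips - enis_needed,
    }


print(legacy_eni_footprint("10.0.1.0/24", concurrent_envs=100))
# {'usable_ips': 251, 'enis_needed': 100, 'ips_left': 151}
```

Under this model, a burst of 20 concurrent environments would already exhaust a small /28 subnet, which has only 11 usable addresses.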

What the VPC networking changes mean for Lambda

To replace the simplistic, wasteful proliferation of Lambda ENIs, AWS borrowed a technique it already uses for Network Load Balancer (NLB) and NAT Gateway: AWS Hyperplane. Hyperplane is an internal AWS system, largely hidden in the plumbing of VPCs, that acts as a distributed packet forwarder, connecting incoming VPC flows to the correct destination IP address and port. It is a mature technology that supports multiple AWS offerings, including NLB and Elastic File System, and it can handle millions of connections per second with submillisecond latency.
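Hyperplane's internals aren't public, so the following Python sketch is only a toy illustration of a stateful flow forwarder, not AWS's implementation. It assumes a hypothetical list of destination (IP, port) targets, picks one per flow (identified by its 5-tuple) with a deterministic hash, and remembers that choice so every later packet of the flow goes to the same place.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# (src_ip, src_port, dst_ip, dst_port, protocol) identifies one flow.
FiveTuple = Tuple[str, int, str, int, str]
# (ip, port) destination that a flow is forwarded to.
Target = Tuple[str, int]


@dataclass
class ToyFlowForwarder:
    """Toy stateful forwarder; NOT how Hyperplane is actually built."""

    targets: List[Target]  # hypothetical destination pool
    flows: Dict[FiveTuple, Target] = field(default_factory=dict)

    def forward(self, flow: FiveTuple) -> Target:
        if not self.targets:
            raise RuntimeError("no targets configured")
        if flow not in self.flows:
            # New flow: hash the 5-tuple so the choice is deterministic.
            key = "|".join(map(str, flow)).encode()
            digest = hashlib.sha256(key).digest()
            index = int.from_bytes(digest[:8], "big") % len(self.targets)
            self.flows[flow] = self.targets[index]
        # Existing flow: reuse the remembered target (flow stickiness).
        return self.flows[flow]


fwd = ToyFlowForwarder(targets=[("10.0.2.15", 5432), ("10.0.2.16", 5432)])
flow = ("192.0.2.10", 51000, "10.0.2.15", 5432, "tcp")
print(fwd.forward(flow) == fwd.forward(flow))  # True
```

Because the target is a hash of the flow rather than a random pick, two independent forwarder nodes with the same target list choose the same target for the same flow, which is one simple way a forwarding layer can be spread across many machines.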

AWS extended Lambda networking to use shared Hyperplane ENIs. Rather than creating ENIs for individual functions, Lambda now lets many functions in an account reach a VPC through the same ENI. Every time a new Lambda execution environment is created, it establishes a tunnel to an existing Hyperplane ENI, which then forwards traffic into the appropriate VPC.

If several workloads use the same Lambda-to-VPC connection at the same time, they share the existing connection, which further improves efficiency and performance. Each Hyperplane ENI is tied to a specific combination of subnet and security groups in an AWS account. As a result, functions in the same account that use the same subnet and security group pairing share the same interface.
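The sharing rule above can be expressed as a small counting model. This Python sketch assumes, for illustration, one shared ENI per distinct pair of subnet and security group set; the function names, subnet IDs and security group IDs are hypothetical. Note that concurrency doesn't appear anywhere in the count, unlike the legacy scheme.

```python
from typing import FrozenSet, Iterable, NamedTuple, Set, Tuple


class FunctionVpcConfig(NamedTuple):
    name: str
    subnet_ids: Tuple[str, ...]
    security_group_ids: FrozenSet[str]  # a set, so ordering doesn't matter


EniKey = Tuple[str, FrozenSet[str]]


def shared_eni_keys(functions: Iterable[FunctionVpcConfig]) -> Set[EniKey]:
    """Distinct (subnet, security-group set) pairs; one shared ENI each in this model."""
    return {
        (subnet, fn.security_group_ids)
        for fn in functions
        for subnet in fn.subnet_ids
    }


functions = [
    FunctionVpcConfig("orders", ("subnet-a", "subnet-b"), frozenset({"sg-app"})),
    FunctionVpcConfig("billing", ("subnet-a", "subnet-b"), frozenset({"sg-app"})),
    FunctionVpcConfig("reports", ("subnet-a",), frozenset({"sg-db"})),
]
print(len(shared_eni_keys(functions)))  # 3
```

Here `orders` and `billing` share two ENIs (one per subnet), and only `reports`, with a different security group, adds a third, no matter how many execution environments each function runs.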

Benefits of the improved system

Lambda's new VPC networking system is easier to use and provides faster scaling and lower latency. It's now the default for VPC-connected functions, requires no additional configuration and is being rolled out gradually across all AWS regions. As of September 2019, it was available to all accounts in the U.S. East (Ohio), EU (Frankfurt) and Asia Pacific (Tokyo) regions.

The new VPC networking technology doesn't change how users set up Lambda's VPC access. Users still must do the following:

- configure Identity and Access Management permissions to enable Lambda to create and delete VPC interfaces;

- configure subnet and security parameters for VPC interfaces; and

- use a NAT device, such as a VPC NAT gateway, or a VPC endpoint to allow functions to access services outside of your VPCs.
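The first two requirements can be sketched in Python as plain data structures: an IAM policy document that grants the EC2 network-interface actions Lambda needs, and the `VpcConfig` parameter (`SubnetIds`, `SecurityGroupIds`) accepted by Lambda's CreateFunction and UpdateFunctionConfiguration APIs. The three actions shown are the core ones in the AWS managed policy AWSLambdaVPCAccessExecutionRole; the current version of that policy may grant more. The helper names and the subnet and security group IDs are hypothetical.

```python
import json
from typing import Sequence

# Core EC2 actions Lambda uses to manage VPC network interfaces for a function.
# Assumption: check AWSLambdaVPCAccessExecutionRole for the current full list.
LAMBDA_VPC_ACTIONS = [
    "ec2:CreateNetworkInterface",
    "ec2:DescribeNetworkInterfaces",
    "ec2:DeleteNetworkInterface",
]


def vpc_access_policy() -> str:
    """Return an IAM policy document (JSON) for the function's execution role."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": LAMBDA_VPC_ACTIONS, "Resource": "*"}
        ],
    }
    return json.dumps(policy, indent=2)


def vpc_config(subnet_ids: Sequence[str], security_group_ids: Sequence[str]) -> dict:
    """Build the VpcConfig shape used by Lambda's function APIs."""
    # This sketch insists on at least one of each; it is our check, not AWS's.
    if not subnet_ids or not security_group_ids:
        raise ValueError("need at least one subnet and one security group")
    return {"SubnetIds": list(subnet_ids), "SecurityGroupIds": list(security_group_ids)}


print(vpc_access_policy())
print(vpc_config(["subnet-0abc"], ["sg-0app"]))
# {'SubnetIds': ['subnet-0abc'], 'SecurityGroupIds': ['sg-0app']}
```

Keeping both pieces as data makes them easy to review and to pass to whatever deployment tool an organization already uses.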

AWS automatically sets up the Hyperplane ENI when a Lambda function is first created or when its VPC configuration changes. This process can take up to 90 seconds to complete. If the function is invoked during this period, it will still execute, but its cold start will take longer than usual.